Why ASICS Digital Builds 12-Factor Apps with a Focus on Infrastructure

Written by Schuyler Brown

Last updated on: May 26, 2022

How ASICS Digital Created a Culture of You Build it, You Run it

John Noss is a Senior Site Reliability Engineer at ASICS Digital, formerly Runkeeper. In this talk, he shares how ASICS Digital builds 12-factor apps with an emphasis on infrastructure. Listen as he walks through how and why they built a dev culture of 'You Build It, You Run It' and download the slides now.

Talk Transcript

John Noss 0:02

Thank you, I'm very happy to be here and excited to share a little bit about what we've been going through. It does include StrongDM, and it also includes a container build process. So it's mostly about how we built a 12-factor container that worked for the people who were going to use it, and some of the things that went into that. So again, I'm John Noss, I'm an SRE at ASICS Digital, and I'm excited to be here.

So, a little bit of orientation: what is ASICS Digital? We're responsible for the consumer-engaging platforms for the global ASICS brands. Learn more, we're hiring. These are the ASICS brands. You might have heard of ASICS the shoes, but it's also other fitness gear, and now fitness apps through Runkeeper and ASICS Studio. Runkeeper is a GPS run tracker for your phone, tech that helps you go outside and do things. And ASICS Studio is audio workouts that you can do at home. And OneASICS is the application that I'm talking about.

As for me, when I was starting this process for ASICS Digital I was new to being an SRE; previously I had worked in high-performance computing. And this was a really cool opportunity to be in a products company that makes things that people use. Again, these opinions are my own, not those of ASICS Digital. This example, OneASICS, was developed by a third party, and we were onboarding it, both hosting and maintaining the application.

So what were some of the goals of this first container build process? It was going to be the first container in our infrastructure. Previously, everything was Jenkins building Packer AMIs. You know, Runkeeper was 10 years old; it was the first running tracking app on the iPhone when the App Store came out. So the priority then was features, and to that end, the tech stack hadn't changed much. But for new apps, we're trying new things: we want to do containers, we want new build processes. So that's where we came in.

To build this, we wanted to enable 12-factor. If you're not familiar with 12-factor, it's a really cool set of things that an application should do to work in a cloud environment: be scalable, be disposable, write logs, take config from environment variables. The one we were working on from the SRE team was reusing binaries between environments, so not having any config in the actual binary. And we also wanted to empower developers to manage their deploy process. This was really important: we were early in the stages of building a culture of "you build it, you run it," so we wanted them to be able to own their release process and have visibility into that.

So what were some of the problems we were faced with at the time? We were going to ECS, and ECS didn't pull new containers if you didn't change their name. Now there's a way to force new deployments, but at the time, you had to change the name to get a new container. We also had two separate repositories that had grown up around this application. One was the Terraform code the SRE team was writing, and the other was the application code that we'd taken over from this vendor. Both of them were building in CircleCI, so we needed some way to trigger them, and we needed some way to get build status back. So we've got some new solutions for this.
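To make the 12-factor idea of taking config from environment variables more concrete, here is a minimal sketch in Python. It is an illustration only, not ASICS Digital's code: the variable names `DATABASE_URL` and `LOG_LEVEL` and the `AppConfig` type are assumptions made up for this example.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class AppConfig:
    database_url: str
    log_level: str


def load_config(environ=None):
    """Build config from environment variables only; nothing is baked into the image."""
    env = os.environ if environ is None else environ
    # DATABASE_URL (hypothetical name) is required: fail fast at startup if missing.
    database_url = env["DATABASE_URL"]
    # LOG_LEVEL (hypothetical name) is optional and falls back to a default.
    log_level = env.get("LOG_LEVEL", "INFO")
    return AppConfig(database_url=database_url, log_level=log_level)


if __name__ == "__main__":
    # The same code, and so the same container, gets different config per environment.
    staging = load_config({"DATABASE_URL": "postgres://staging-db/app"})
    prod = load_config({"DATABASE_URL": "postgres://prod-db/app", "LOG_LEVEL": "WARNING"})
    print(staging)
    print(prod)
```

Because nothing environment-specific lives in the code, the same built artifact can move from dev to staging to production unchanged, which is the "reuse binaries between environments" goal described above.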

John Noss 2:56

There was also a little bit of config that still came in and got baked in for the environments. It took collaboration with the development team to work those out. And then, this is both good and bad: CircleCI is not Jenkins. I'm sure some people prefer Jenkins, but CircleCI has some cool new features, like deploys from GitHub tags and branches. But you lose some things that you had in Jenkins: there's no dropdown to pick which build to deploy. So we had to find a way to work around that. So what were some of the options we were faced with? EKS hadn't come out yet, and we decided at the time that we didn't want to roll our own Kubernetes.

So it was going to be ECS. Fortunately, some of my coworkers at ASICS Digital had already written Terraform modules. There's the ECS cluster one, which is in the community modules upstream, and that deploys your actual EC2 auto scaling group that's going to be your ECS cluster. So it sort of puts the magic back into this. And then the ECS service one is how you actually deploy an application. And a little bit on nomenclature, because I'm probably going to call these things spines and spikes, and you might not know what those are unless you watch kids' TV shows and read our reference documentation.

So the spine is the shared infrastructure that every application uses. It's the shared ECS cluster, it's the Route 53 zones, it's the ACM certs; it's all those things that are shared across tiers and applications. And then a spike sort of comes off of that spine. Those are where an application lives: it has its ECS service, it has its Route 53 records, and it looks up with data resources in Terraform where to put those things. There are links on our model, and links to the Charity Majors posts on Terraform, which are super fantastic. And an early link for our new pattern, which we're calling mono spike.

I'll talk more about that in a little bit. More options were pulling from tags or branches, because CircleCI could work from either: which did the developers want, which ones were good ideas, and how to redeploy the same binary? So I'll dig into a little bit of the solution that we came up with. This one was easy: the deploy just changed the name, you got a new container, you got a new deployment on ECS.
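One common way to "change the name" on every build is to tag the image with the commit it was built from. The sketch below assumes that approach; the talk does not say exactly how the names were generated, and the repository name and helper functions here are hypothetical.

```python
def image_reference(repository: str, commit_sha: str) -> str:
    """Return an image reference that is unique per commit, e.g. repo:abc123def456."""
    if len(commit_sha) < 7:
        raise ValueError("expected a full or abbreviated git commit SHA")
    # Truncate to 12 characters to keep the tag readable.
    return f"{repository}:{commit_sha[:12]}"


def needs_new_deployment(running_image: str, desired_image: str) -> bool:
    """A changed image name is what signals that a new deployment is needed."""
    return running_image != desired_image


if __name__ == "__main__":
    old = image_reference("example/app", "1111111aaaaaaa")
    new = image_reference("example/app", "2222222bbbbbbb")
    print(old, new, needs_new_deployment(old, new))
```

A per-commit name also makes it unambiguous which code a running container came from.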

But reusing binaries was a little harder, because we didn't have a dropdown menu in Jenkins anymore. So what I ended up doing was writing a script that would scan back in time to find the most recent commit that wasn't a fast-forward merge. And I have some diagrams that will make a little more sense of this. So here, a developer has made the blue branch, the feature branch. They've deployed it to dev from a tag, and they can deploy again from a tag. And then when they merge it back to master, master does a build of the application. Later, when that developer or a QA person on the team releases it to staging, all they do is a PR from master to the staging release, and magic happens. The script goes back in time and sees that that first commit was a merge commit that didn't have any diffs, that it was a fast-forward merge. So it uses the build that was already built on master.

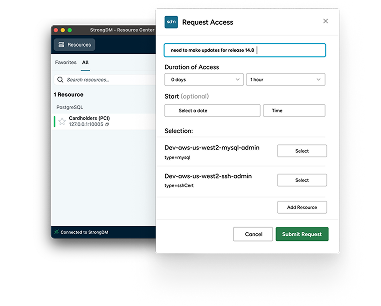
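The idea of scanning back through merge commits can be sketched as follows. This is a simplified model, not the actual script from the talk: commits are held in an in-memory dictionary rather than read from git, and the `Commit` type, the `built` set, and the function name are assumptions for illustration. In a real repository, the parent list and the "no diff" check could come from commands such as `git rev-list --parents -n 1 <sha>` and `git diff --quiet <a> <b>`.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set, Tuple


@dataclass(frozen=True)
class Commit:
    sha: str
    parents: Tuple[str, ...]  # parent SHAs, first parent first
    tree: str                 # identifier of the content snapshot (like a git tree hash)


def find_reusable_build(history: Dict[str, Commit], head: str, built: Set[str]) -> Optional[str]:
    """Walk back from `head` through merge commits that changed nothing.

    Returns the SHA of an already-built commit whose content is identical
    to `head`, or None if a fresh build is required.
    """
    current = history[head]
    while current.sha not in built:
        if len(current.parents) != 2:
            return None  # an ordinary, unbuilt commit: nothing to reuse
        merged = history[current.parents[1]]
        if merged.tree != current.tree:
            return None  # the merge introduced changes, so it needs its own build
        current = merged  # identical content: keep looking further back
    return current.sha


if __name__ == "__main__":
    history = {
        "m1": Commit("m1", ("m0", "f1"), "tree-A"),  # feature merged to master, built there
        "s1": Commit("s1", ("s0", "m1"), "tree-A"),  # PR from master to staging, no diffs
        "p1": Commit("p1", ("p0", "s1"), "tree-A"),  # PR from staging to production, no diffs
    }
    print(find_reusable_build(history, "p1", built={"m1"}))
```

In this model, both the staging and production release merges resolve to the same commit on master, so the image built there can be reused without a rebuild.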

So this means all you have to do is pull a container, retag it, and you're off to the races. You don't need to do a rebuild, and no build-time issues can come up. Same thing with production: it runs the same script and finds the same commit back on master. So when you get to production, you've already tested that binary; the exact same thing that's running in production is what you've already tested. I think I'm going to skip through this pretty quickly, but you can look it up. This is some of the magic about how it works: here's finding out how many parents that commit had, and here's finding out whether there are any merge conflicts, like checking that diff to see if there's stuff in there. I'm sure there are better ways to do this, but this works for us. And this is back to how developers share the dev environment, because when you're deploying from tags, any feature branch could be tagged and deployed at any time. This doesn't handle schema migrations, so developers are using StrongDM. I'm not a paid shill.

But we really like it because they can connect straight to the databases that they need and make whatever changes they want. And whenever a developer is done with their environment, they can finish up with that. One interesting thing that came out of this: I live in AWS all the time, so for me to check what's on the environment, I just go check the task definition. But the developers didn't have that.

So what they ended up using was their Slack topic. When someone did a dev deploy, they would go and change the Slack topic to be "my name, this time." So if an hour or two later, or the next day, somebody else wanted to use that AWS dev environment, they'd just go check the topic, and they could see who to ask, "Hey, are you done with that?" So, you know, it would have been nice to automate that, but there was a workaround.

Here's a bug discovered: make a new branch, merge back. It's a little bit like Git flow, but it's different from a branch per release, because it's one long-running release branch. I'm sure there are other variations of this; this is the pattern that we came up with. And one thing that I personally really liked about this process is that when you do a PR to the production release branch, you've got one last chance to confirm this is the code that you're expecting to see go live. When you're in the GitHub UI for a tag release, you don't actually see what code is going live. You know you're putting a tag at the head of something, and you can diff against where your old tag was, but it's a little bit more opaque. With the PR, it's much more clear: this is what's going live, I'm clicking the button now.

Another thing we really like is that there's no magic in Jenkins; all the build and deploy is in CircleCI's config.yml. And the developers actually took the lead: because this code was in the application repo, they factored it out into helper scripts. So it's in a better place with this collaboration. And that's what we wanted: for the development team to be able to manage that application and that release process.

Another really important part was collaborating closely with the dev team. We'd sort of been working independently, the app team working on their features and the SRE team building out the Terraform, but it became clear that we were giving the same updates in two different stand-ups. I would say one thing, and then somebody else would say something an hour later. So we just went to the same stand-up. In the run-up to migration, you know, I sat near their desks, so if they needed a CloudFront invalidation, I could just help with that. It made things much faster with that collaboration.

So I'm going to talk a little bit about secrets management in CircleCI. Through Vault we get one-time AWS credentials. We have some later applications that use more things, but this is the one thing that's in this one. And a note on deploying Consul and Vault: we have them in ECS. My coworkers at ASICS Digital wrote these modules that deploy Consul and Vault on top of ECS. So if you're in AWS, you're in ECS, and you want Consul and Vault, this is the magic that makes it happen.

And they did a workshop at DevOpsDays Boston last year. The other thing we wanted out of this process: OneASICS was our first application that was going to be in a container, so we needed a process, we needed some way to deploy it. But then we were going to have other applications that were also containers, so we needed to iterate on the process and use what we learned to make it better for the next version. You know, this sort of started the process.

And we've made improvements at each step. So, stepping into a little bit of a retrospective: how did we do at meeting our goals with this? Well, we did create a container build and deploy pipeline, and it does successfully build and deploy containers. So we check that one off. Does it reuse binaries between environments? Yes, it does. All config comes in from the environment, so the same binary that runs in production is the one being tested. And one side note on this: it actually extends all the way to local dev, where developers are running Docker on their laptops. So they're as close to production as possible, with the same container. I mean, it's a little bit different locally, because there we're running a Postgres container while on AWS it's RDS. But the container for the application is the same. So we really like that part.

And then empowering developers to manage their deploy process. This is something that is a work in progress. Developers own their release process; they are doing the releases themselves, with no intervention from SRE. The code that manages their deploy process is in their application repo, so if they want to change how the tests work, they can just make a PR to their own app code repo and change how the tests work. But for some other things, like a change in Terraform or a big change in the process to a different kind of release model, SRE is going to be involved. And that's what we'd hoped for: just to be a partner in that.

So on secrets management, we've made a lot of progress on newer applications, getting application secrets from Vault using IAM auth. This is one cool thing you get from Vault: you can have AWS be the trusted third party, so you don't have to put any secrets in your application. No secrets, not even a secret to get to Vault, because AWS can vouch for your application and you use the IAM role to get there. And then you use envconsul as the entry point. This came up when I was talking to someone earlier: it's kind of badly named, in that it's called envconsul but it also works for Vault. And the same with consul-template.

consul-template will write out a config file, and then reload your application if your secrets change. And you can also do database credentials dynamically. So you talk to Vault at what looks like a static endpoint, but it's really dynamic: it creates a database connection for you on the fly. And then I mentioned this pattern of two repos, one that was Terraform and one that was the application. We've got a new version of that which we're calling mono spike, and there's a reference version of it that's up. This is sort of a play on monorepo, where all your code is in one place, and spike, which is what we call applications. So mono spike is application code and Terraform code in the same repo. What CircleCI does is a Docker build, and then it runs Terraform to do the deploy, depending on what tag you pushed. So if you push master, you just get a build and tests. If you push a deploy dev tag, you get that same build, and it deploys to the dev environment. And it's the same with other tags for staging and production.
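The tag-driven behavior described above can be sketched as a small function that maps a pushed ref to pipeline steps. The tag names (`deploy-dev`, `deploy-staging`, `deploy-production`) and step labels are hypothetical; the talk only says that there is a deploy tag per environment and that master gets a build and tests.

```python
from typing import List

# Hypothetical tag names, one per environment.
DEPLOY_TAGS = {
    "deploy-dev": "dev",
    "deploy-staging": "staging",
    "deploy-production": "production",
}


def plan_pipeline(ref: str) -> List[str]:
    """Return the ordered steps the pipeline would run for a pushed branch or tag."""
    # Every push gets the same Docker build and tests.
    steps = ["docker build", "run tests"]
    environment = DEPLOY_TAGS.get(ref)
    if environment is not None:
        # Only a deploy tag adds a Terraform run against that environment.
        steps.append(f"terraform apply ({environment})")
    return steps


if __name__ == "__main__":
    for ref in ("master", "deploy-dev", "deploy-production"):
        print(ref, "->", plan_pipeline(ref))
```

Keeping the mapping in one table makes it easy to see that every environment goes through the same build step and differs only in where Terraform deploys.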

But now, to dig a little deeper into the retrospective, there are some things I wish I'd asked more about sooner. You know, it made sense to deploy feature branches from tags for dev, and it made sense to me to deploy from PRs for staging and prod. But what does that actually mean for developers? If you're making a change, you might have to make a lot of PRs to get it through your Terraform repo and your application repo. So there were some pain points we worked out there. And the other requirement when I was building this process was that all builds must be tested on staging. So there's a requirement in this code base that says, before you deploy to production, you must deploy to staging.
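The "must deploy to staging before production" rule can be expressed as a simple check. This is a sketch under assumptions, not the check from the actual code base: the deployment log format and the function name are invented for illustration.

```python
from typing import Iterable, Tuple


def can_promote_to_production(build_id: str, deployments: Iterable[Tuple[str, str]]) -> bool:
    """True only if this exact build was previously deployed to staging.

    `deployments` is a hypothetical log of (environment, build_id) pairs.
    """
    return any(env == "staging" and deployed == build_id for env, deployed in deployments)


if __name__ == "__main__":
    log = [("dev", "build-41"), ("staging", "build-41"), ("dev", "build-42")]
    print(can_promote_to_production("build-41", log))
    print(can_promote_to_production("build-42", log))
```

A check like this pairs naturally with reusing the same binary, since the thing verified on staging is exactly the thing promoted.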

Now, developers always want a hotfix. So some of our newer applications have a hotfix path that doesn't go through staging, and it's a little bit faster. And, you know, where does that fit in with the product that is this build process? How do we backport those changes into the earlier applications? And then there's this one about identifying who was using the environment. That wasn't something that even occurred to me, because I had tools that showed me that, and I didn't realize that the developers didn't see those tools. So we could come up with a new way; you know, we could automate posting that Slack topic or something like that.
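As one possible shape for that automation, the sketch below builds (but does not send) a request to Slack's `conversations.setTopic` Web API method. The topic wording, the function name, and the idea of calling it from a deploy job are assumptions; the talk only suggests that posting the topic could be automated.

```python
import json
import urllib.request

SLACK_SET_TOPIC_URL = "https://slack.com/api/conversations.setTopic"


def build_topic_request(token: str, channel_id: str, user: str, environment: str, when: str):
    """Build an HTTP request that sets a channel topic naming who is using an environment."""
    # Hypothetical topic format: which environment, who has it, and since when.
    topic = f"{environment}: in use by {user} since {when}"
    body = json.dumps({"channel": channel_id, "topic": topic}).encode("utf-8")
    return urllib.request.Request(
        SLACK_SET_TOPIC_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json; charset=utf-8",
        },
        method="POST",
    )


if __name__ == "__main__":
    request = build_topic_request("xoxb-placeholder", "C0000000000", "jnoss", "dev", "10:15")
    # Sending is left out on purpose; urllib.request.urlopen(request) would perform the call.
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
```

A deploy job could call something like this right after a successful dev deploy, so the topic always reflects the most recent user of the environment.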

And the general category for this is product management for infrastructure things; it's not just for feature things. There have been a couple of really cool talks recently; if you want to learn more, I'd highly recommend checking these out: Jen Woolner at SREcon and Maggie Stratton at DevOpsDays Boston. These were a lot about how you do product management when you don't have a product manager. You're an SRE, but you can still do user interviews, you can still do feature sprints, you can still work on these things. And then there's that goal: how do you learn things? When you've done it two or three times and automated it, how do you pull that back?

How do you go back to your earlier application that is working and make these changes, to bring in some of the new things that you've learned? So what's the bigger idea? Each new build and deploy pipeline that you build is an opportunity to learn and bring new improvements, and that backporting is important. You know, if you're building something, think about what you'd change. That's how we've evolved to the mono spike pattern, and we continue to get feedback.

Next, product management, even for infrastructure, is really important. You know, you want to ask your developers, who are your customers: what do you want out of this tool? What is the pain point that you're seeing? Not just, what is the pain point that I'm seeing as the SRE building that tool. And then the end goal is to increase developer velocity. Sorry if that's a buzzword, but that's the goal: to make these builds and deploys go faster and be more straightforward. You know, we're still not 100% satisfied with our workflow; the ECS scheduler has some delays. But I think given the same inputs, we'd probably come up with a system very similar to this, one that lets developers deploy straight out of GitHub, from tags and from branches, with the magic to deploy the right things.

And going forward, we're looking at other tooling with the goal of enabling developers to describe, build, deploy, and run their applications with little involvement from SRE. We'll be there for guidance, for patterns, for tooling, for code reviews on Terraform, but really, we're trying to enable developers to run their applications, you know, as they want to. They're holding the pager, so they should be running it.

Thank you.

Next Steps

StrongDM unifies access management across databases, servers, clusters, and more—for IT, security, and DevOps teams.

- Learn how StrongDM works

- Book a personalized demo

- Watch a StrongDM walkthrough

About the Author

Schuyler Brown, Chairman of the Board, began working with startups as one of the first employees at Cross Commerce Media. Since then, he has worked at the venture capital firms DFJ Gotham and High Peaks Venture Partners. He is also the host of Founders@Fail and author of Inc.com's "Failing Forward" column, where he interviews veteran entrepreneurs about the bumps, bruises, and reality of life in the startup trenches. His leadership philosophy: be humble enough to realize you don’t know everything and curious enough to want to learn more. He holds a B.A. and M.B.A. from Columbia University. To contact Schuyler, visit him on LinkedIn.
